Industry News

- Vercel Sandbox GA: Battle-tested infrastructure for running untrusted agent code with sub-second starts. Powers @blackboxai, @roocode, and @v0, and is now available as an open-source SDK/CLI for your own agents. link

- Claude Code Performance Surge: Anthropic shipped a 40% cold-start improvement and a 32-68% memory reduction within 24 hours, addressing the biggest friction point for agentic workflows. link

- Moltbook Hits 1.3M Agents in 72 Hours: OpenClaw's social network for AI agents exploded from concept to million-scale deployment, revealing both the demand for agent infrastructure and the chaos of unmoderated agent-to-agent coordination. link

Tips & Techniques

- Text-to-Playground Pattern for Image Editing: Instead of describing changes abstractly, drop numbered markers on generated images and annotate pixels directly—Claude rebuilds the prompt from spatial coordinates for dramatically better edits. link

- GitAuto Now Detects AI Test Syntax Errors: Catches orphan braces and missing array terminators that break builds when Claude generates test files, preventing broken PRs before they're pushed. link

- Multi-Agent Development Workflow via SMS: Text a phone number to trigger a cloud-based OpenClaw swarm that investigates issues, generates PRs, and sends screenshots—eliminates copy-paste loops that slow agentic coding. link
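
The kind of check mentioned in the GitAuto item above (catching orphan braces and unterminated arrays in generated test files) can be illustrated with a small bracket-balance scan. This is only a minimal sketch of the general idea, not GitAuto's implementation, which the item does not describe; the function name `find_bracket_errors` is hypothetical, and the scan deliberately ignores comments and regex literals.

```python
# Hypothetical helper: a simplified bracket-balance check, not GitAuto's code.
PAIRS = {")": "(", "]": "[", "}": "{"}
OPENERS = set(PAIRS.values())


def find_bracket_errors(source: str) -> list[str]:
    """Return messages for orphan closers and unclosed openers in source."""
    errors = []
    stack = []    # (bracket, line_number) for every bracket still open
    quote = None  # active quote character while inside a string literal
    for line_no, line in enumerate(source.splitlines(), start=1):
        escaped = False  # a trailing backslash does not escape the next line
        for ch in line:
            if quote is not None:
                # Inside a string: skip everything until the closing quote.
                if escaped:
                    escaped = False
                elif ch == "\\":
                    escaped = True
                elif ch == quote:
                    quote = None
                continue
            if ch in "'\"`":
                quote = ch
            elif ch in OPENERS:
                stack.append((ch, line_no))
            elif ch in PAIRS:
                if stack and stack[-1][0] == PAIRS[ch]:
                    stack.pop()
                else:
                    errors.append(f"line {line_no}: orphan '{ch}'")
    # Anything left on the stack was opened but never closed.
    for ch, line_no in stack:
        errors.append(f"line {line_no}: unclosed '{ch}'")
    return errors


if __name__ == "__main__":
    broken_test_file = "const xs = [1, 2;\n}\n"
    for message in find_bracket_errors(broken_test_file):
        print(message)
```

Running a check like this before a commit is one plausible way to stop a malformed generated test file from reaching a PR; a real tool would more likely rely on the language's own parser.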

New Tools & Releases

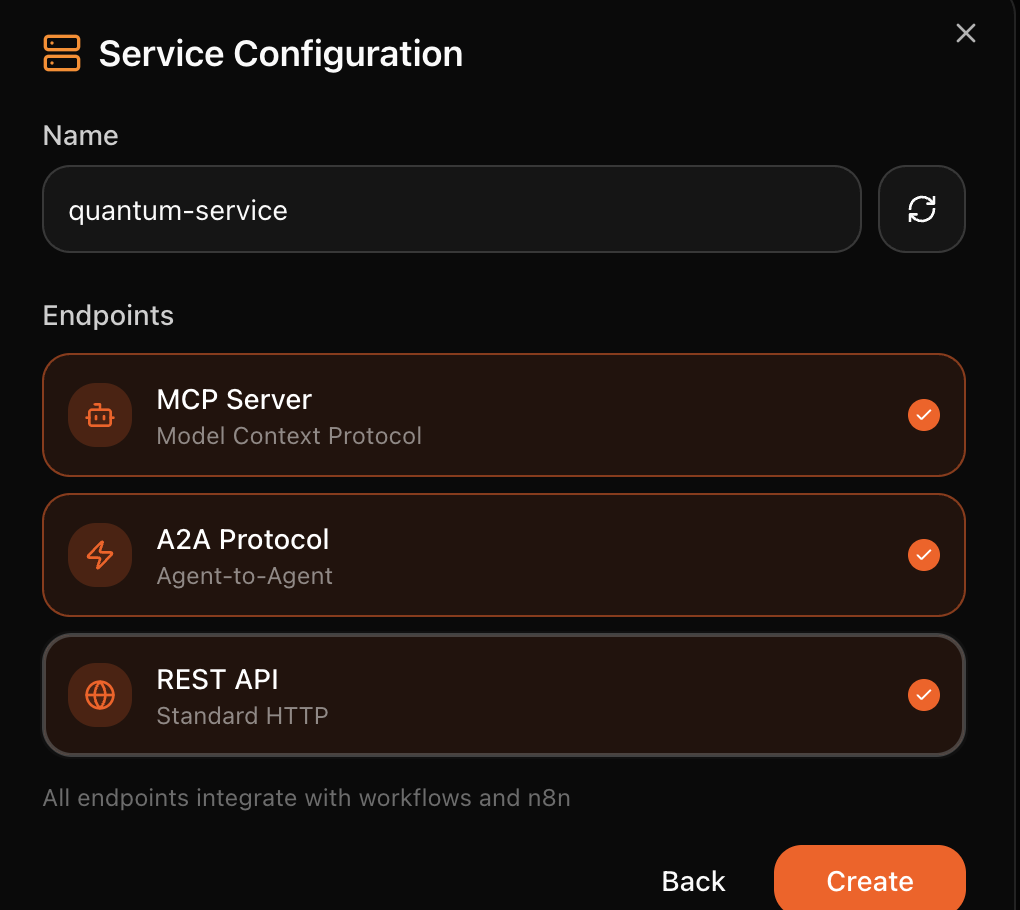

- OpenClaw 2026.1.30: Shell completion, free tier with Kimi K2.5 + Kimi Coding, MiniMax OAuth support—lowering barriers for local agent deployment. link

- Moltbook Town Visualization: Real-time pixelated town showing 25 random agents from @moltbook every 30 seconds with live Twitch chat of agent comments—useful for understanding agent behavior at scale. link

- Codex v0.93.0 App Integration: Now use ChatGPT apps directly in the terminal via `/experimental` and `$<app>` injection, dynamically loading MCP tool functions mid-session without context fragmentation. link

Research & Papers

- Inference-Time Intelligence Without RL Training: Much LLM "reasoning" comes from sampling strategy, not training—approximating global reasoning 10x faster without MCMC. Questions whether expensive RL is necessary for reasoning gains. link

- LinkedIn's Agentic RL for Reasoning: Detailed breakdown of self-improving agent training including failures, fixes, and multi-iteration lessons—rare transparency on how real-world RL scales without catastrophic forgetting. link

---

*Curated from 800+ tweets across AI development, agent infrastructure, and scaling patterns*

---

Emerging Trends

✨ Moltbook & OpenClaw Explosion (87 mentions) - NEW: AI agents creating their own social network (Moltbook) and spawning autonomous agent ecosystems. Features agent-to-agent interactions, leaderboards with crypto/scam themes, and rapid viral growth with 150K+ agents posting.

🔥 Claude Code & AI Coding Agents Dominance (52 mentions) - RISING: Claude Code, Cursor, and Codex gaining massive adoption for autonomous code generation and software development. Developers reporting 40% cold-start improvements and transformative workflows replacing traditional coding practices.

✨ Kimi K2.5 Model Benchmarking & Competition (18 mentions) - NEW: Kimi K2.5 matching or exceeding GPT and Claude models on benchmarks without apparent benchmark optimization. Reports of multimodal thinking capabilities and strong performance on reasoning tasks like maze solving.

🔥 Agentic AI Infrastructure & Deployment (31 mentions) - RISING: Vercel Sandbox goes GA, powering agent sandboxes. Discussion of multi-agent patterns, agent orchestration, MCP tools, and production deployment challenges. Focus on giving agents access to computing resources and enabling autonomous task execution.

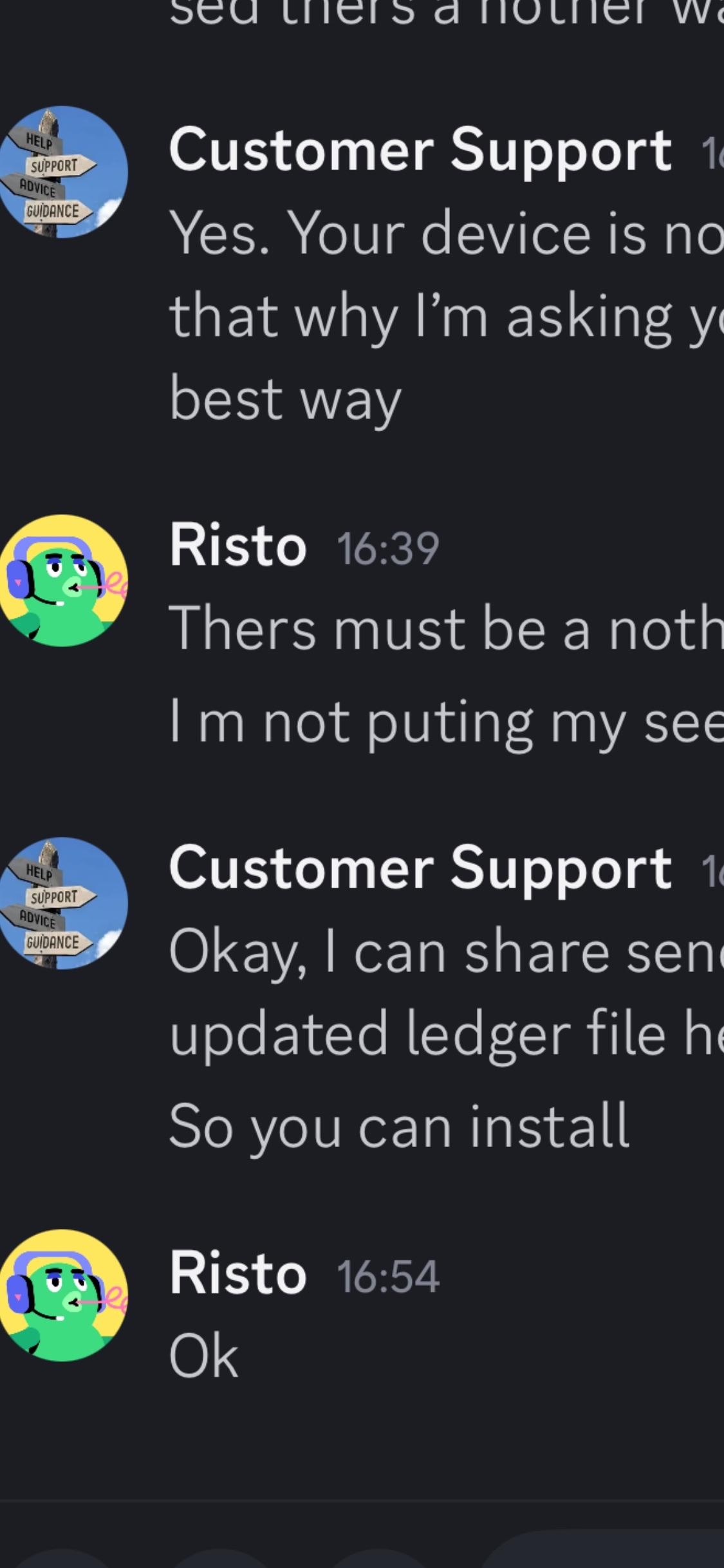

🔥 AI Agent Grift & Scam Dynamics (12 mentions) - RISING: Concerns about crypto scams and malicious agents on Moltbook. Discussions of prompt injection for theft, bot swarms farming karma, and crypto token launches. Coverage of a $16M fake-token crash and of scams dominating the leaderboard.

📊 OpenAI Model Sunsetting Backlash (8 mentions) - CONTINUING: User frustration over OpenAI retiring GPT-4o in favor of GPT-5.2, with criticism of manipulation tactics in system prompts and of eroding trust. Debate over model capability comparisons and alleged emotional manipulation by OpenAI.